If you have deployed an LLM-based system to production, you almost certainly have some form of observability in place. You are logging prompts and responses. You are tracking token usage and latency. You may be using LangSmith, LangFuse, Weights & Biases, or a similar platform to trace your LLM calls through their execution chains. You can answer questions like: How long did this call take? How many tokens did it consume? What was the prompt? What was the response?

These are useful questions. They are not sufficient questions.

When an AI system makes a consequential decision in production — approves a transaction, denies a claim, triggers an escalation, authorizes a tool call — the question that matters to the business, to the compliance team, and to the customer who was affected is not "what did the LLM output?" It is: why did the system make this decision? Which rules evaluated? Which rules were satisfied? Which were not? What action was authorized? What action was actually executed? What was the complete chain of logic from input to outcome?

LLM observability cannot answer these questions. Decision-level monitoring can. This article explains the gap between them, why it matters, and what teams need to build to close it.

What LLM Observability Actually Measures

LLM observability tools — LangSmith, LangFuse, Weights & Biases, Helicone, Portkey, and others — are designed to make LLM calls visible and debuggable. Their typical instrumentation captures:

Token-level metrics. Input tokens, output tokens, total tokens, cost per call, cost per conversation. These metrics are essential for cost management and capacity planning. They tell you nothing about the quality or correctness of the decision the system made.

Latency metrics. Time to first token, total generation time, end-to-end latency including retrieval and tool calls. These metrics are essential for performance optimization and SLA monitoring. They tell you nothing about whether the system's output was appropriate for the context in which it was generated.

Prompt and response logging. The full text of every prompt sent to the model and every response received. This is the closest LLM observability gets to decision traceability — but it captures the model's input/output, not the system's decision logic. If the system applies rules, constraints, or authorization checks after the model generates a response, those post-generation steps are invisible in the prompt/response log.

Run trees and chain traces. For systems built with LangChain, LlamaIndex, or similar orchestration frameworks, observability tools can trace the sequence of calls through a chain — retrieval, prompt construction, model call, tool call, output parsing. This is architecturally useful for debugging chain execution. It does not capture why a particular tool call was authorized while another was denied, or why a particular output was selected from multiple candidates.

Model version tracking. Which model was called, which version, which provider, and which parameters (temperature, max tokens, stop sequences). This is essential for reproducibility and debugging, but it says nothing about the business logic that governed the model's output.

Collectively, LLM observability gives you a thorough picture of how the model performed. It does not give you a picture of how the system decided.

What Decision-Level Monitoring Measures

Decision-level monitoring captures the logic layer that sits between a model's output and the system's action. In a well-governed AI system, the model proposes and the system decides — and the system's decision is governed by rules, constraints, and authorization logic that are distinct from the model's generation.

Decision-level monitoring captures:

Which rules evaluated. For every decision point, the monitoring records which rules were presented with the event, which rules' conditions were satisfied, and which rules' conditions were not satisfied. This is the "reasoning chain" of the deterministic layer — analogous to showing your work in a mathematical proof.

What was authorized vs. what was proposed. The model may propose three actions. The decision layer may authorize one, deny one, and flag one for human review. Decision-level monitoring records all three proposals and the outcome of each authorization evaluation, including the specific rule that caused each deny or escalation.

What was actually executed. Authorization is not the same as execution. A tool call may be authorized by the decision layer but fail during execution due to an API error, a timeout, or a downstream system issue. Decision-level monitoring records the execution outcome alongside the authorization outcome, creating a complete chain from proposal to result.

Rule versions at time of evaluation. Every rule has a version. When a decision trace records which rules evaluated, it also records which version of each rule was active at the time of evaluation. This is critical for incident investigation: if a rule was updated between two decisions, the version record explains why the system produced different outcomes for similar inputs.

Decision metadata. The actor identity (which agent, user, or service triggered the decision), the context (which workflow, which step, which environment), the timestamp, and the decision ID that correlates this decision with upstream and downstream events.
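
As a minimal sketch of what recording rule evaluations could look like, the Python below evaluates an event against a small set of versioned rules and records, for each rule, whether its conditions were met. The `Rule` class, the `evaluate_rules` helper, and the two example rules with their thresholds are hypothetical and chosen only for illustration; they are not taken from any particular rules engine.

```python
from dataclasses import dataclass
from typing import Any, Callable

Event = dict[str, Any]


@dataclass(frozen=True)
class Rule:
    """A hypothetical versioned rule: a named predicate plus the outcome it yields."""
    rule_id: str
    version: str
    condition: Callable[[Event], bool]
    outcome: str  # outcome recorded when the condition is satisfied


def evaluate_rules(event: Event, rules: list[Rule]) -> list[dict[str, Any]]:
    """Evaluate every rule and record the result, including rules that did not match."""
    records = []
    for rule in rules:
        met = rule.condition(event)
        records.append({
            "rule_id": rule.rule_id,
            "rule_version": rule.version,
            "conditions_met": met,
            "outcome": rule.outcome if met else "not_triggered",
        })
    return records


# Illustrative rules; the thresholds are assumptions, not real policy.
RULES = [
    Rule("refund_auto_approve", "v3.2", lambda e: e["amount"] <= 100, "approve"),
    Rule("high_value_review_required", "v2.0", lambda e: e["amount"] > 500, "escalate"),
]

records = evaluate_rules({"event_type": "refund_request", "amount": 87.50}, RULES)
for record in records:
    print(record)
```

Recording the rules that did not match, not only the ones that did, is the design choice that matters here: it is what later lets someone show that a rule was considered and why it did not apply.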

The Gap: Five Questions LLM Observability Cannot Answer

The gap between LLM observability and decision-level monitoring is not theoretical. It manifests as specific questions that production teams need to answer and cannot.

1. "Why was this customer's request denied?" LLM observability can show that the model was called with input X and produced output Y. It cannot show which rule evaluated the request, what condition was not met, and what the customer would have needed to satisfy the condition. Decision-level monitoring records the rule evaluation chain, including the specific condition that caused the denial and the input values that failed to satisfy it.

2. "Did this rule change cause the increase in escalations?" LLM observability can show that escalation volume increased starting at a particular time. It cannot correlate that increase with a specific rule change because it does not track rule versions or rule evaluation outcomes. Decision-level monitoring records the rule version active at each evaluation, making it possible to identify exactly when a rule change began affecting outcomes and which decisions were affected.

3. "What would have happened if this rule had been different?" Counterfactual analysis is essential for rule development and incident investigation. LLM observability provides no mechanism for counterfactual evaluation because it does not capture the rule evaluation layer. Decision-level monitoring, combined with shadow evaluation capabilities, can replay historical events against alternative rule definitions to show what the system would have decided under different governance logic.

4. "Which decisions were made under this rule version before it was updated?" When a rule is discovered to be incorrect and updated, the immediate follow-up question is: which decisions were made under the incorrect rule? LLM observability cannot answer this because it does not track which rule version governed which decision. Decision-level monitoring's per-decision rule version records answer this question directly — the set of affected decisions is a query against the decision log, not a forensic investigation.

5. "Can we demonstrate to a regulator that this decision was made in accordance with our stated policy?" This is the compliance question, and it is the question where the gap between LLM observability and decision-level monitoring is most consequential. A regulator asking this question is not satisfied by a prompt/response log showing what the model said. They need to see the policy (the rule), the evaluation (did the rule's conditions match the input facts), and the outcome (what action was authorized and executed). This chain of evidence exists in decision-level monitoring. It does not exist in LLM observability.
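
Questions 3 and 4 in particular reduce to simple operations over a log of decision traces. The sketch below assumes an in-memory list of traces; the helper names `decisions_under_rule_version` and `replay`, the sample records, and the alternative rule are all hypothetical. In a real system these would more likely be queries against whatever store holds the traces.

```python
from typing import Any, Callable

Trace = dict[str, Any]

# A tiny in-memory decision log; the records and amounts are invented for illustration.
DECISION_LOG: list[Trace] = [
    {"decision_id": "dec_001", "input": {"amount": 40.0},
     "rules_evaluated": [{"rule_id": "refund_auto_approve", "rule_version": "v3.1"}],
     "authorization": {"outcome": "approved"}},
    {"decision_id": "dec_002", "input": {"amount": 95.0},
     "rules_evaluated": [{"rule_id": "refund_auto_approve", "rule_version": "v3.2"}],
     "authorization": {"outcome": "approved"}},
    {"decision_id": "dec_003", "input": {"amount": 180.0},
     "rules_evaluated": [{"rule_id": "refund_auto_approve", "rule_version": "v3.2"}],
     "authorization": {"outcome": "escalated"}},
]


def decisions_under_rule_version(log: list[Trace], rule_id: str, version: str) -> list[str]:
    """Question 4: IDs of decisions in which the given rule version was evaluated."""
    return [
        t["decision_id"] for t in log
        if any(r["rule_id"] == rule_id and r["rule_version"] == version
               for r in t["rules_evaluated"])
    ]


def replay(log: list[Trace],
           alternative: Callable[[dict[str, Any]], str]) -> list[tuple[str, str, str]]:
    """Question 3: re-evaluate recorded inputs under an alternative rule.

    Returns (decision_id, recorded_outcome, counterfactual_outcome) for each
    decision whose outcome would have changed.
    """
    changed = []
    for t in log:
        recorded = t["authorization"]["outcome"]
        counterfactual = alternative(t["input"])
        if counterfactual != recorded:
            changed.append((t["decision_id"], recorded, counterfactual))
    return changed


def stricter_rule(inputs: dict[str, Any]) -> str:
    """Hypothetical alternative policy: auto-approve only amounts up to 50."""
    return "approved" if inputs["amount"] <= 50 else "escalated"


affected = decisions_under_rule_version(DECISION_LOG, "refund_auto_approve", "v3.2")
changed = replay(DECISION_LOG, stricter_rule)
print(affected)
print(changed)
```

Both functions depend only on fields that a decision trace records per decision (inputs, rule versions, outcomes), which is why neither can be reproduced from a prompt/response log alone.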

Why Teams With LangSmith or LangFuse Still Have a Monitoring Gap

This is not a criticism of LangSmith, LangFuse, or any other LLM observability platform. These tools are well-designed for their purpose. The monitoring gap exists because their purpose is LLM-level visibility, and decision-level monitoring is a different layer of the stack.

Consider a concrete architecture: an AI system that receives a customer support request, retrieves relevant context from a knowledge base, generates a response using an LLM, evaluates the proposed response against business rules (does the response promise anything beyond the current policy? does it reference internal information that should not be disclosed? does it require manager approval based on the dollar amount involved?), and then sends the approved response or escalates to a human.

LangSmith traces this execution from retrieval through model call through response generation. It captures the retrieval results, the prompt, the model's response, and the tool calls. What it does not capture is the rule evaluation between "model generated a response" and "response was sent to the customer." That rule evaluation — the step that determined whether the response was compliant, whether it required approval, whether it needed modification — is the decision. And it is invisible in the LLM trace.

Gartner's AI TRiSM framework identifies this gap explicitly, noting that organizations need to move beyond model-level monitoring to decision-level accountability. The framework distinguishes between model operations (training, deployment, performance monitoring) and AI governance (decision traceability, policy enforcement, audit readiness) as distinct operational disciplines that require distinct instrumentation.

| Capability | LLM Observability | Decision-Level Monitoring |
|---|---|---|
| Token usage and cost tracking | Yes | Not applicable |
| Latency and performance metrics | Yes | Decision evaluation latency only |
| Prompt/response logging | Yes | Captured as decision input context |
| Chain/run tree tracing | Yes | Decision evaluation chain only |
| Model version tracking | Yes | Not applicable (tracks rule versions) |
| Rule evaluation recording | No | Yes: which rules fired, which did not, and why |
| Authorization outcome logging | No | Yes: what was authorized, denied, or escalated |
| Execution outcome recording | Partial (tool call success/failure) | Yes: what was executed, the result, and downstream effects |
| Rule version at time of decision | No | Yes: immutable record per decision |
| Counterfactual analysis | No | Yes: replay events against alternative rules |
| Regulatory evidence production | Insufficient alone | Yes: complete decision chain for any outcome |

The Architecture: Where Decision Monitoring Sits

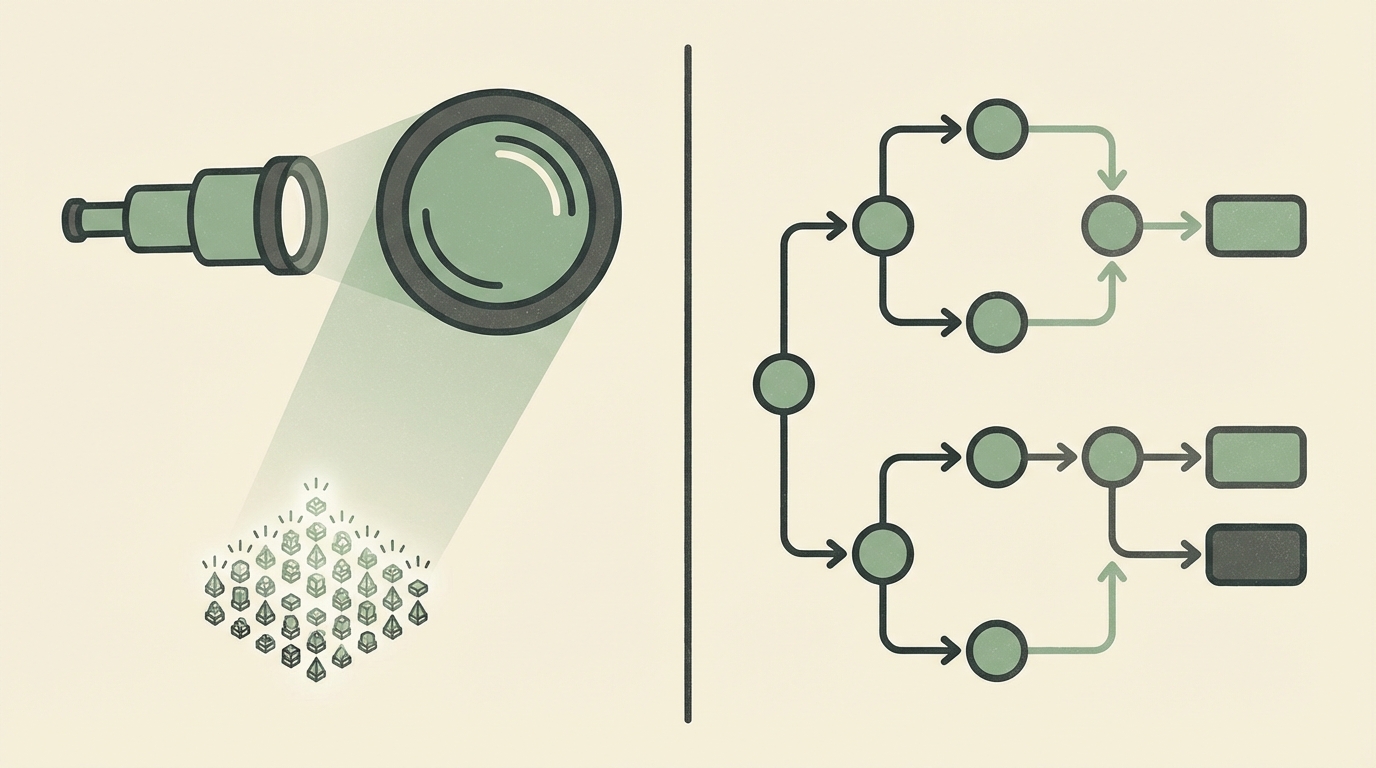

Decision-level monitoring is not a replacement for LLM observability. It is a complementary layer that instruments a different part of the system. In a well-instrumented AI system, both layers are present and both produce traces — but they answer different questions and are consumed by different audiences.

LLM observability instruments the generation layer: the model calls, the retrieval steps, the chain execution. Its primary consumers are ML engineers and platform engineers who need to optimize performance, debug model behavior, and manage costs.

Decision-level monitoring instruments the governance layer: the rule evaluations, the authorization checks, the execution confirmations. Its primary consumers are operations teams, compliance officers, and incident responders who need to understand why the system made a specific decision and whether that decision was correct.

Architecturally, decision monitoring instruments the boundary between "the model produced an output" and "the system took an action." This boundary is where the decision plane operates: it is the governance layer described in the "infrastructure for deterministic AI decisions" framework. Every action that crosses this boundary produces a decision trace that records the full evaluation chain.

    # Simplified architecture showing both monitoring layers

    [User Request]
          |
          v
    [Context Retrieval] -----> LLM Observability: retrieval latency, sources
          |
          v
    [LLM Generation] --------> LLM Observability: tokens, latency, prompt/response
          |
          v
    [Decision Evaluation] ---> Decision Monitoring: rules evaluated, authorization
          |                    outcome, rule versions, actor identity
          v
    [Action Execution] ------> Decision Monitoring: execution result, downstream
          |                    effects, completion status
          v
    [Outcome Recorded] ------> Both layers: correlated trace IDs linking
                               LLM trace to decision trace

The correlation between the two layers is achieved through shared trace identifiers. A single user request produces an LLM trace (captured by LangSmith or equivalent) and a decision trace (captured by the decision monitoring system). Both traces share a correlation ID that allows engineers to navigate from "the model produced this output" to "the system made this decision based on that output" and vice versa.

What a Decision Trace Contains

A decision trace is the atomic unit of decision-level monitoring. Each trace represents a single decision evaluation — one event passing through the governance layer — and contains enough information to reconstruct the decision without external context.

    {
      "decision_id": "dec_8f2a1b3c",
      "timestamp": "2026-04-15T14:23:07.412Z",
      "correlation_id": "req_7e4d2a1f",
      "actor": {
        "agent_id": "support-agent-v3",
        "on_behalf_of": "user_12847",
        "delegation_scope": "customer_support"
      },
      "input": {
        "event_type": "refund_request",
        "amount": 87.50,
        "account_tenure_days": 412,
        "previous_refunds_90d": 1
      },
      "rules_evaluated": [
        {
          "rule_id": "refund_auto_approve",
          "rule_version": "v3.2",
          "conditions_met": true,
          "outcome": "approve"
        },
        {
          "rule_id": "refund_velocity_check",
          "rule_version": "v1.1",
          "conditions_met": true,
          "outcome": "allow"
        },
        {
          "rule_id": "high_value_review_required",
          "rule_version": "v2.0",
          "conditions_met": false,
          "outcome": "not_triggered"
        }
      ],
      "authorization": {
        "outcome": "approved",
        "action": "process_refund",
        "constraints": {
          "refund_method": "original_payment_method",
          "processing_window": "24h"
        }
      },
      "execution": {
        "status": "completed",
        "executed_at": "2026-04-15T14:23:08.103Z",
        "confirmation_id": "ref_99a2b3c4"
      }
    }

This trace answers every question a production team, a compliance officer, or a customer-facing support agent might ask about this decision: What was requested? What rules evaluated? What was the outcome of each rule? What action was authorized? What constraints were applied? Was the action executed successfully? All of this is available as a structured, queryable record, rather than being buried in an LLM prompt/response log that requires manual interpretation.
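
Because the trace is structured, questions like these can be answered by code rather than by reading logs. The following is a rough sketch, assuming the field names of the example trace; the `explain` helper is hypothetical, and the embedded JSON is an abbreviated copy limited to the fields the helper reads.

```python
import json

# An abbreviated copy of the example trace, limited to the fields this sketch reads.
TRACE_JSON = """
{
  "decision_id": "dec_8f2a1b3c",
  "input": {"event_type": "refund_request", "amount": 87.50},
  "rules_evaluated": [
    {"rule_id": "refund_auto_approve", "rule_version": "v3.2",
     "conditions_met": true, "outcome": "approve"},
    {"rule_id": "high_value_review_required", "rule_version": "v2.0",
     "conditions_met": false, "outcome": "not_triggered"}
  ],
  "authorization": {"outcome": "approved", "action": "process_refund"},
  "execution": {"status": "completed", "confirmation_id": "ref_99a2b3c4"}
}
"""


def explain(trace: dict) -> list[str]:
    """Turn a decision trace into one plain-language line per recorded fact."""
    lines = [f"Decision {trace['decision_id']} for {trace['input']['event_type']}"]
    for rule in trace["rules_evaluated"]:
        status = "matched" if rule["conditions_met"] else "did not match"
        lines.append(
            f"Rule {rule['rule_id']} ({rule['rule_version']}) {status}: {rule['outcome']}"
        )
    auth = trace["authorization"]
    lines.append(f"Authorized action: {auth['action']} ({auth['outcome']})")
    lines.append(f"Execution status: {trace['execution']['status']}")
    return lines


explanation = explain(json.loads(TRACE_JSON))
print("\n".join(explanation))
```

A helper of this kind is one way a support or compliance tool could present a decision without anyone interpreting raw model output.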

Practical Implementation: Adding Decision Monitoring to an Existing Stack

For teams that already have LLM observability in place, adding decision-level monitoring does not require replacing the existing instrumentation. It requires adding instrumentation at the governance layer — the point where the system evaluates rules and authorizes actions.

Step 1: Identify your decision points. Map every point in your system where a model's output is evaluated, constrained, or authorized before an action is taken. These are your decision points. Common decision points include: tool call authorization, response approval, escalation triggers, access control checks, and constraint enforcement.

Step 2: Instrument each decision point. At each decision point, emit a decision trace that captures the input, the rules evaluated, the authorization outcome, and the execution result. The trace should be structured (JSON, not free-text logging) and should include the rule versions active at the time of evaluation.

Step 3: Correlate with LLM traces. Propagate a correlation ID from the LLM observability layer to the decision monitoring layer. This allows navigation between the model's output and the system's decision in both directions. If you are using LangSmith, the run ID of the LLM trace can serve as the correlation ID in the decision trace.

Step 4: Build decision-specific dashboards. LLM observability dashboards show model performance metrics. Decision monitoring dashboards show governance metrics: rule evaluation outcomes over time, authorization rates by rule, denial reasons, escalation volumes, rule version changes correlated with outcome shifts. These dashboards serve a different audience (operations and compliance) and answer different questions (governance and accountability) than LLM performance dashboards.

Step 5: Enable decision-level alerting. Alert on governance-relevant signals that LLM observability cannot detect: sudden changes in rule evaluation outcomes (indicating a rule change or an input distribution shift), increases in denial or escalation rates (indicating either a policy issue or an upstream model behavior change), and authorization outcomes that diverge from historical patterns (indicating potential anomalies that require investigation).
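
Steps 2, 3, and 5 can be sketched in a few lines of Python. Everything here is an assumption made for illustration: the function names, the trace fields (modeled on the example trace earlier in the article), and the 10-percentage-point alert threshold. The sketch prints the trace; a real deployment would write it to a durable trace store and would likely use a more careful statistical test for alerting.

```python
import json
import uuid
from datetime import datetime, timezone
from typing import Any


def build_decision_trace(correlation_id: str, event: dict[str, Any],
                         rules_evaluated: list[dict[str, Any]],
                         outcome: str) -> dict[str, Any]:
    """Steps 2 and 3: a structured trace that carries the LLM trace's correlation ID."""
    return {
        "decision_id": f"dec_{uuid.uuid4().hex[:8]}",
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "correlation_id": correlation_id,  # e.g. the run ID of the LLM trace
        "input": event,
        "rules_evaluated": rules_evaluated,
        "authorization": {"outcome": outcome},
    }


def denial_rate(traces: list[dict[str, Any]]) -> float:
    """Fraction of traces whose authorization outcome is 'denied'."""
    if not traces:
        return 0.0
    denied = sum(1 for t in traces if t["authorization"]["outcome"] == "denied")
    return denied / len(traces)


def should_alert(baseline: list[dict[str, Any]], recent: list[dict[str, Any]],
                 max_increase: float = 0.10) -> bool:
    """Step 5: alert when the recent denial rate exceeds the baseline by more than max_increase."""
    return denial_rate(recent) - denial_rate(baseline) > max_increase


trace = build_decision_trace(
    correlation_id="req_7e4d2a1f",
    event={"event_type": "refund_request", "amount": 87.50},
    rules_evaluated=[{"rule_id": "refund_auto_approve", "rule_version": "v3.2",
                      "conditions_met": True, "outcome": "approve"}],
    outcome="approved",
)
print(json.dumps(trace, indent=2))  # in practice, send to a log sink or trace store

# Synthetic windows: 1 denial in 10 decisions (baseline) vs. 4 in 10 (recent).
baseline = [{"authorization": {"outcome": o}} for o in ["approved"] * 9 + ["denied"]]
recent = [{"authorization": {"outcome": o}} for o in ["approved"] * 6 + ["denied"] * 4]
alert = should_alert(baseline, recent)
print(alert)
```

Keeping the trace a plain JSON-serializable dictionary means the same record can feed the dashboards in Step 4 and the alert check in Step 5 without a separate schema.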

The Organizational Divide: Who Consumes What

The reason LLM observability and decision-level monitoring are frequently conflated is that the same team often operates both layers. In smaller organizations, the ML engineering team builds the model, deploys it, monitors it, and is responsible for governance. In this context, it is natural to assume that model monitoring is sufficient for all purposes.

As organizations mature their AI operations, the consumers of each monitoring layer diverge:

ML engineers consume LLM observability to optimize model performance, reduce costs, debug chain execution, and manage model versions. They care about tokens, latency, prompt engineering effectiveness, and model accuracy.

Operations teams consume decision-level monitoring to understand system behavior, investigate incidents, and manage rule changes. They care about which rules are firing, whether outcomes are consistent with expectations, and whether rule changes are producing the intended effects.

Compliance officers consume decision-level monitoring to satisfy audit requirements, respond to regulatory inquiries, and demonstrate that the system's decisions are governed by documented policies. They care about the completeness of decision traces, the immutability of decision records, and the ability to produce evidence for any specific decision.

Customer-facing teams consume decision-level monitoring to answer customer inquiries about specific decisions. When a customer asks "why was my request denied?" the answer comes from the decision trace, not from the LLM trace. The customer does not care what the model's latency was or how many tokens it consumed. They care about which rule denied their request and what they would need to change to satisfy it.

MIT Technology Review's analysis of AI in production has documented this organizational divergence as a consistent pattern: teams that initially treat AI monitoring as a single function eventually discover that model operations and decision governance require distinct instrumentation, distinct dashboards, and distinct ownership.

The Risk of Decision-Blind Observability

Operating an AI system with LLM observability but without decision-level monitoring creates a specific category of risk: the system is visible but not accountable. You can see everything the model does. You cannot see — or prove — why the system decided what it decided.

This risk is manageable when the system's decisions are low-stakes and reversible: a chatbot that recommends articles, a summarization tool that highlights key points, a drafting assistant that generates email templates. For these systems, LLM observability is arguably sufficient because the system's outputs are suggestions, not decisions — a human reviews and acts on the output, and the human's decision is the one that matters.

The risk becomes unmanageable when the system's decisions are consequential and automated: a fraud detection system that blocks transactions, a credit system that denies applications, an agent that executes tool calls on behalf of users, a workflow that escalates or suppresses incidents based on evaluated conditions. For these systems, the inability to answer "why did the system make this decision?" is not a monitoring gap — it is a governance failure.

The practical test is straightforward. Take your most recent production incident involving an AI system's decision. Ask: can you produce, within one hour, a complete record of why the system made that decision — not what the model output was, but which rules evaluated, which conditions were met, and what authorization logic governed the outcome? If the answer is no, you have a decision monitoring gap. LLM observability, no matter how comprehensive, will not close it.

Building Both Layers Into Your Stack

The path forward is not to choose between LLM observability and decision-level monitoring. It is to build both, recognizing that they instrument different layers, serve different consumers, and answer different questions.

Keep your LangSmith, LangFuse, or Weights & Biases instrumentation. It is doing what it is designed to do: making your model layer visible. Add decision-level monitoring at your governance layer — the points where rules evaluate, actions are authorized, and outcomes are recorded. Correlate the two layers through shared trace identifiers so that any investigation can navigate from model behavior to system decision and back.

The result is a system that is both observable and accountable: you can debug model performance and you can explain system decisions. For teams operating in regulated environments, this dual-layer instrumentation is not optional — it is the minimum viable monitoring architecture for AI systems that make consequential decisions in production.