If you are deploying AI agents, robotics, or intelligence systems into environments where outcomes matter, "good enough" model outputs are not the same thing as acceptable decisions. A model can be probabilistic, but your decision authority cannot be. The missing layer for many teams is the infrastructure for deterministic AI decisions: an enforceable decision boundary that is explicit, auditable, and safe to change.

This article lays out what that decision infrastructure is, why it matters in 2026, and how to design it so you can understand, control, and trace every consequential decision.

Why Deterministic AI Decisions Are Suddenly Non-Negotiable

Modern systems increasingly let models do more than summarize or classify. They recommend actions, call tools, move money, change patient workflows, set access policies, steer robots, or trigger escalations. The problem is not that AI is "unreliable," it is that unbounded autonomy plus unclear accountability is a bad fit for:

- Safety-critical environments (robotics, industrial automation, defense, healthcare)

- Regulated decisions (finance, insurance, employment, identity, credit, security)

- High-impact enterprise workflows (privileged access, incident response, procurement)

Meanwhile, compliance expectations are converging toward a consistent theme: you must be able to show what happened, why it happened, and who was responsible.

- The NIST AI Risk Management Framework emphasizes governance, traceability, and accountable processes across the AI lifecycle.

- The EU AI Act introduced a risk-based regulatory regime that increases obligations for high-risk systems (including documentation, logging, and oversight).

- Security teams are also treating agentic systems as a new attack surface, reflected in resources like the OWASP Top 10 for LLM Applications.

In practice, "deterministic" does not mean your model becomes perfectly predictable. It means your system's final decision points are governed by explicit rules and controls, not left to emergent behavior.

What "Deterministic Decision Authority" Actually Means

A useful mental model is to split your system into two layers:

- Generation layer (probabilistic): LLM outputs, retrieval results, perception models, heuristic planners.

- Decision layer (deterministic): explicit business rules, policy checks, approvals, constraints, and enforcement.

Deterministic decision authority is the mechanism that:

- Defines what counts as a valid decision

- Enforces policy at every decision point

- Records a complete decision trace (inputs, rules evaluated, outputs, approvals)

- Controls rollout and change management of those rules

This is not "prompting harder." It is building an infrastructure where the model can propose, but the system decides.

The Core Components of Infrastructure for Deterministic AI Decisions

A decision infrastructure for agents and high-stakes automation typically includes the following building blocks.

| Component | What It Does | Why It Matters for Deterministic Decisions |

|---|---|---|

| Decision gateway (policy enforcement point) | Centralizes decision evaluation before an action executes | Prevents "side door" actions that bypass policy checks |

| Rule and policy management | Stores explicit business rules and constraints (with governance) | Makes decision logic reviewable, testable, and change-controlled |

| Traceability and audit logs | Captures decision inputs, evaluated rules, and final outputs | Enables audits, incident response, and postmortems with evidence |

| Governed workflows | Models multi-step decisions with checkpoints and approvals | Stops "agent drift" across long tasks and tool chains |

| Data and retrieval governance | Controls which sources can be queried, filtered, and used | Reduces leakage, prompt injection impact, and data integrity risk |

| Rollout and change safety | Provides staged releases, validation, and reversibility | Lets you update rules without breaking production decisions |

| Integration completeness reporting | Verifies every integration path is covered by governance | Ensures no tool, API, or service bypasses decision controls |

A common failure mode is to implement only logging, or only approvals, or only RBAC. Determinism emerges when enforcement, traceability, and change control are built into the same path that executes actions.

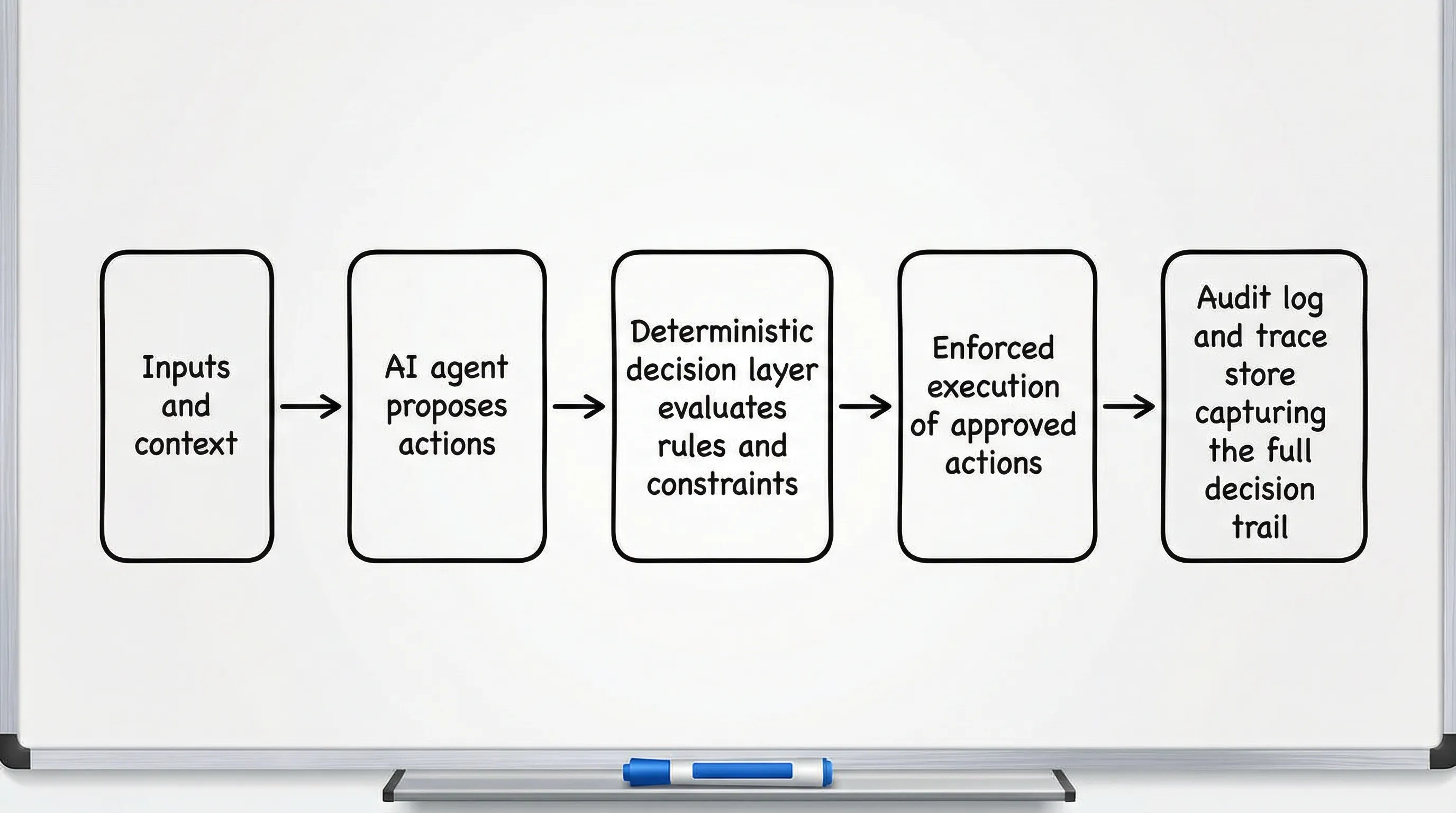

A Reference Architecture: "Propose Then Decide"

The most reliable pattern for agentic systems is:

- Models and tools generate candidates (plans, tool calls, recommended actions).

- The decision infrastructure evaluates those candidates against deterministic rules.

- Only approved actions are executed.

Where Determinism Should Live

Aim for determinism at the action boundary. Examples:

- "Can this agent send an email to an external domain?"

- "Is this purchase within delegated authority and budget?"

- "Does this robot have a safe path and a human-approved zone?"

- "Can this system retrieve from source X for this user and purpose?"

Your model can still draft an email, propose a vendor, or plan a route. The deterministic layer decides whether the action is permitted, under what constraints, and with what audit trail.

Designing Deterministic Decision Points (What to Govern)

Not every step needs governance. Focus on irreversible or high-impact transitions.

1) Tool and Action Invocation

If your agent can call tools, your highest leverage control is a deterministic tool gate:

- Which tools are allowed for this agent identity and context

- Parameter constraints (limits, allowed targets, safe ranges)

- Rate limits and spend limits

- Required approvals for sensitive actions

This reduces the chance that a prompt injection, hallucination, or mis-specified goal becomes a real-world incident.

2) Identity, Authority, and Delegation

Agentic systems often fail because authority is implied rather than explicit. Deterministic decision infrastructure should encode:

- Who the agent is acting on behalf of

- What authority is delegated (scope, time window, value limits)

- How the system handles exceptions (deny, escalate, or "break glass")

3) Retrieval and Data Usage

Retrieval is now part of "decision making," not just search. Govern it like you would govern access to production databases:

- Approved sources and disallowed sources

- Purpose and context restrictions (need-to-know)

- PII handling rules (masking, redaction, retention)

- Citation and provenance requirements for consequential outputs

4) Multi-Step Workflows with Checkpoints

Long-running agent workflows are where small errors compound. Add deterministic checkpoints at:

- State transitions (draft to send, quote to purchase, plan to execute)

- Cross-system boundaries (CRM to payment, ticketing to production)

- Risk thresholds (value, sensitivity, privileged access)

Rule Changes: Safe Rollout Without Breaking Production

In 2026, many teams ship agent updates weekly, but the real risk is often policy drift, not model drift. Your infrastructure should treat rules like production code, even if managed by non-engineers.

A practical rollout approach:

- Shadow evaluation: evaluate new rules alongside the current rules, but do not enforce yet.

- Diff-based review: inspect what decisions would have changed, and why.

- Staged enforcement: start with a small cohort, a single geography, or a low-risk workflow.

- Fast rollback: revert rule sets quickly if unexpected denials or approvals appear.

This is also where "no-code" rule management helps, as long as it is backed by audit logs, access controls, and change history.

What to Log to Make Decisions Audit-Grade

Many systems log events, but cannot answer, "Why did the system allow this?" For audit-grade decision logs, capture the minimum set that makes the decision reproducible and reviewable:

| Log Element | Example | Why It Matters |

|---|---|---|

| Decision ID and timestamp | dec_7f3... | Enables trace and correlation across systems |

| Actor identity | agent ID, user ID, service principal | Establishes responsibility and delegation |

| Inputs and context | request payload, relevant state, environment | Explains what the system knew at decision time |

| Rules evaluated | rule names/versions, outcomes | Shows deterministic logic, not just a result |

| External data provenance | sources queried, versions, access grants | Supports integrity and compliance reviews |

| Decision outcome | allow/deny/allow-with-constraints | The authoritative result |

| Enforcement record | tool call executed, parameters, confirmations | Proves the decision was actually enforced |

Be deliberate about sensitive data in logs. Determinism does not require storing secrets or raw personal data, it requires storing the evidence of evaluation.

Integration Completeness: The Forgotten Control

Even well-designed governance fails if a tool path bypasses it. Integration completeness reporting is the discipline of proving that:

- Every tool invocation routes through the decision gate

- Every workflow checkpoint produces a trace

- Every data source has governed access and provenance

This is especially important when teams add new tools quickly (for example, a new payments API, a new ticketing integration, or a new internal knowledge base).

Common Failure Modes (and How Deterministic Infrastructure Prevents Them)

Prompt Injection Becomes an Action

Without a decision gate, a malicious instruction inside retrieved content can cause:

- Unauthorized data exfiltration

- Tool misuse (sending data externally, altering records)

- Privilege escalation by tricking the agent into "helpful" actions

A deterministic layer forces every action to satisfy explicit constraints, even if the model is convinced otherwise.

"It Worked in Staging" Rule Drift

If rule changes are not rolled out safely, you get unpredictable denials and approvals. Shadow evaluation and staged enforcement reduce this risk.

Missing Trace During Incident Response

In post-incident reviews, the key questions are predictable: What input triggered this, what rules were applied, who approved, and what was executed? Audit-grade decision logs answer those questions without guesswork.

Where Memrail Fits

Memrail is built as decision infrastructure for consequential execution, focusing on deterministic control at the points where AI agents and automated systems make high-stakes choices.

Based on the capabilities Memrail provides, it maps directly to the requirements above:

- Deterministic decision authority to govern what is allowed, denied, or constrained

- Full decision traceability and audit-grade decision logs to support compliance and investigations

- Safe rollout of rule changes so governance can evolve without breaking production

- No-code rule management so policy owners can maintain rules with control

- Governed multi-step workflows and human-facing agent governance for checkpointed execution

- Governed content retrieval and multi-source data governance to control what the system can use

- Integration completeness reporting to reduce bypass risk

If you are moving from "agents as prototypes" to "agents as production actors," this is the layer that turns model output into controlled execution.

Frequently Asked Questions

What is "infrastructure" in the context of deterministic AI decisions?

In this context, infrastructure refers to decision infrastructure: the governance, enforcement, and traceability layer that makes high-stakes decisions explicit, deterministic, and auditable.

Can AI decisions be deterministic if the model is probabilistic?

Yes. You keep the model as a generator of candidates, but you enforce deterministic rules at the decision boundary (for example, tool calls, approvals, and constrained execution).

Is logging enough to govern AI agents?

Logging is necessary but not sufficient. You also need policy enforcement (so the system cannot bypass rules) and safe rule change management (so governance stays stable over time).

Where should I place decision checkpoints in an agent workflow?

Place them at irreversible transitions (send, purchase, deploy, grant access), cross-system boundaries, and when risk thresholds are exceeded (value, sensitivity, privilege).

How do I reduce prompt injection risk in agentic systems?

Combine governed retrieval (source allowlists, provenance) with deterministic action gating (tool constraints and approvals). Treat retrieved content as untrusted input.

Build Deterministic Control Into Your Agent Stack

If your systems are starting to execute real actions, the fastest way to reduce risk is to separate "the model's suggestion" from "the system's decision." Memrail provides deterministic decision authority, traceability, governed workflows, and audit-grade logs so you can control and prove every consequential decision.