Changing a live business rule in a SaaS system is scarier than deploying new code. It should not be — but it is, and the reason reveals something important about how most teams manage their decision logic.

When you deploy new code, you have a pipeline. There is a test suite, a staging environment, a code review, probably a feature flag, and a rollback procedure. The deployment is observable: you can watch error rates, latency, and conversion metrics shift in real time. If something goes wrong, you revert.

When you change a business rule — a pricing tier boundary, a trial-conversion trigger, a dunning sequence threshold, a refund eligibility condition — most SaaS teams ship the change directly to production, watch support tickets for complaints, and roll back manually if things break. The rule has no test suite. It has no staging environment. It has no canary cohort. It goes from "off" to "live for every customer" the moment someone saves the config or pushes the update.

This article introduces safe rollout as a first-class concept for business logic — borrowing from software deployment engineering and adapting it for rules rather than code. The four stages described here are the same stages implemented in production rule management systems, including Memrail's Safe Rollout feature, which makes this pattern accessible without building it from scratch.

Why SaaS Rule Changes Have a High Blast Radius

Business rules in SaaS products are not passive configuration. They govern customer-facing outcomes at the most sensitive moments in the customer relationship: the moment a trial expires, the moment a payment fails, the moment a downgrade is processed, the moment a refund is issued or denied.

A rule change that goes wrong in these moments does not produce a silent error or a server 500 that you catch in logs. It produces a customer who was on day 18 of a 14-day trial and just got locked out. It produces a churn intervention email sent to a customer who paid three minutes ago. It produces a refund denied by a policy threshold that was updated while the support ticket was still open.

The blast radius of a rule change is proportional to two factors: how frequently the rule fires, and how customer-visible its outcomes are. A pricing tier boundary that evaluates on every API call can affect thousands of customers within minutes of a bad change going live. A trial-conversion rule that fires daily can produce a morning of surprised cancellations before anyone notices the misconfiguration.

ZenML's analysis of 1,200 production deployments found that logic and configuration changes, not model updates, were responsible for the majority of production incidents in AI-adjacent systems in 2025. The implication is clear: the risk is in the rules, and the rules need the same deployment discipline as the code.

Borrowing From Software Deployment: Canary, Shadow, Blue-Green Adapted for Rules

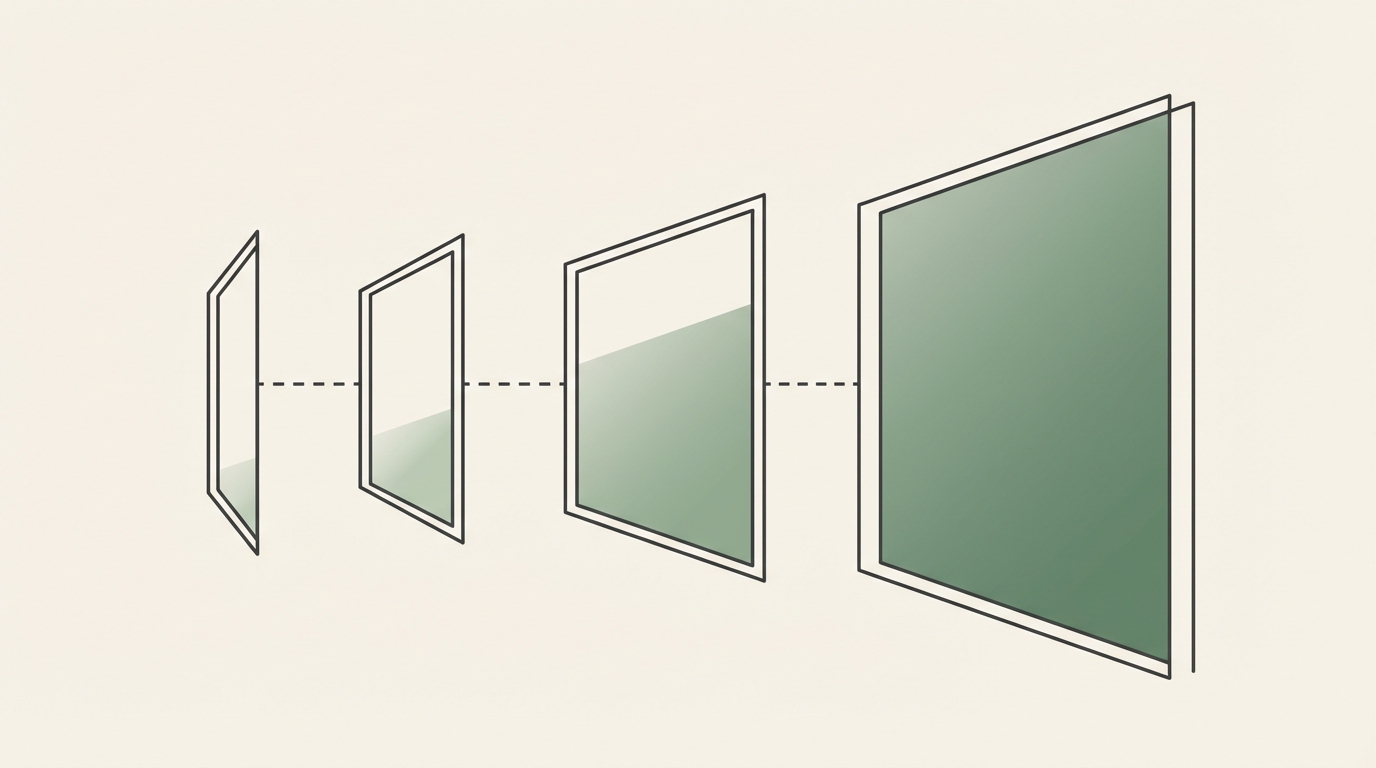

Software engineers solved the "how do we change production behavior safely" problem decades ago. The answer is staged deployment: move from no exposure to full exposure incrementally, measuring at each stage and verifying before proceeding.

The three canonical patterns from software deployment apply to business rules with small adaptations:

Canary deployment routes a small slice of traffic to the new version, keeping the rest on the old version. For rules, this means the new rule applies to 5% of eligible events, and the old rule applies to the remaining 95%. You measure outcomes on both cohorts and promote if the new rule performs as expected.

Shadow mode runs the new version in parallel with the old version, but only the old version's output is acted upon. The new version's output is logged for analysis. For rules, shadow mode means the new rule evaluates against live traffic and produces decisions — but those decisions are recorded, not enforced. You can see exactly what the new rule would have done without any customer ever experiencing it.

Blue-green deployment maintains two complete environments and switches traffic between them instantly. For rules, this translates to maintaining a versioned rule set that can be activated or deactivated atomically — so a rollback is a matter of switching the active version, not reverting a code deploy or hunting through config files.

These three patterns combine into a four-stage protocol for SaaS decision rules, one that any operations or engineering team can implement.

The Four Safe Rollout Stages for Business Rules

Stage 1: Draft — The Rule Exists But Evaluates Nothing

A rule in Draft state is a first-class citizen of your rule management system: it has a name, a version, an owner, and a written definition. But it evaluates no live traffic and produces no decisions. Draft is where you write, review, and refine the rule before it touches production data.
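As a rough illustration of what such a record might hold, the sketch below models a rule with the four fields named above plus a lifecycle stage. The `Stage` enum, the `ALLOWED` transition table, and the method names are hypothetical and not taken from any particular rule engine.

```python
from dataclasses import dataclass, replace
from enum import Enum


class Stage(Enum):
    DRAFT = "draft"
    SHADOW = "shadow"
    CANARY = "canary"
    ACTIVE = "active"
    ARCHIVED = "archived"


# Hypothetical transition table: forward one stage at a time, or back to Draft.
ALLOWED = {
    Stage.DRAFT: {Stage.SHADOW},
    Stage.SHADOW: {Stage.CANARY, Stage.DRAFT},
    Stage.CANARY: {Stage.ACTIVE, Stage.DRAFT},
    Stage.ACTIVE: {Stage.ARCHIVED},
    Stage.ARCHIVED: {Stage.ACTIVE},  # reactivation during a rollback
}


@dataclass(frozen=True)
class Rule:
    name: str
    version: int
    owner: str
    definition: str
    stage: Stage = Stage.DRAFT

    def sees_live_traffic(self) -> bool:
        """Draft and archived rules are never handed production events."""
        return self.stage in {Stage.SHADOW, Stage.CANARY, Stage.ACTIVE}

    def move_to(self, target: Stage) -> "Rule":
        """Return a copy in the new stage, refusing any skipped stage."""
        if target not in ALLOWED[self.stage]:
            raise ValueError(f"{self.stage.value} -> {target.value} is not allowed")
        return replace(self, stage=target)
```

Because the record is frozen, every stage change produces a new object, which keeps the history of a rule easy to log.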

The discipline at the Draft stage is review: does the rule express what the owner intends? Are the conditions correct? Are there edge cases that produce unexpected outcomes? Is the rule's scope properly bounded — does it fire only on the events it should fire on?

Draft stage is also where you write test cases. For every rule, define at least three: an event that should trigger the rule, an event that should not, and a boundary-condition event that sits at the edge of the trigger criteria. These tests run automatically in future stages to confirm the rule behaves consistently.
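The three-case minimum can be written down as data next to the rule. The sketch below uses an invented refund rule (a 30-day window) purely for illustration; `RuleTestCase`, `refund_eligible`, and `failing_cases` are hypothetical names.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class RuleTestCase:
    label: str
    event: dict
    expected: bool


def refund_eligible(event: dict) -> bool:
    """Hypothetical draft rule: refunds are allowed within 30 days of purchase."""
    return event["days_since_purchase"] <= 30


DRAFT_TESTS = [
    RuleTestCase("should trigger", {"days_since_purchase": 10}, True),
    RuleTestCase("should not trigger", {"days_since_purchase": 45}, False),
    RuleTestCase("boundary", {"days_since_purchase": 30}, True),
]


def failing_cases(rule: Callable[[dict], bool], cases: list[RuleTestCase]) -> list[str]:
    """Return the labels of cases the rule gets wrong; an empty list means pass."""
    return [c.label for c in cases if rule(c.event) != c.expected]
```

The boundary case is the one that earns its keep: a version of the rule written with `<` instead of `<=` passes the first two cases and fails only the third.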

Stage 2: Shadow — The Rule Evaluates Live Traffic but Does Not Act

When a rule moves to Shadow, it begins evaluating against live production events in real time. Every event that the rule would fire on is presented to the rule; the rule produces a decision — approve, deny, trigger action X. But nothing happens. The decision is written to a shadow log, not acted upon.
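A minimal sketch of that evaluation path, assuming rules are plain functions from an event to a decision string and the shadow log is an in-memory list (a real system would write to durable storage). All names here are illustrative.

```python
from typing import Callable

Rule = Callable[[dict], str]


def evaluate_with_shadow(event: dict, active_rule: Rule, shadow_rule: Rule,
                         shadow_log: list[dict]) -> str:
    """Enforce the active rule's decision; record the shadow rule's decision only."""
    enforced = active_rule(event)
    try:
        shadow = shadow_rule(event)
    except Exception as exc:  # a broken shadow rule must never affect customers
        shadow = f"error: {exc!r}"
    shadow_log.append(
        {"event_id": event["id"], "enforced": enforced, "shadow": shadow}
    )
    return enforced
```

The shadow rule runs inside a `try` block so that an exception in the candidate rule becomes a log entry rather than a production failure.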

Shadow mode is the most valuable stage for rule validation, because it answers the question that staging environments cannot: "what would this rule have done to my actual customers in the last 24 hours?" The shadow log shows you the rule's decisions against real production traffic, including edge cases and unusual account states that your test cases might have missed.

The metric you are watching in Shadow is shadow decision alignment: does the new rule produce the same decisions as the current rule for the vast majority of events, with differences confined to the cases the change was meant to affect? If it does, the change is behaving as expected. If it diverges significantly, particularly on customer-visible events, you investigate before proceeding.
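One way to compute alignment, assuming each shadow log record carries an `event_type`, the `enforced` decision, and the `shadow` decision (these field names are assumptions for this sketch):

```python
from collections import Counter


def shadow_alignment(records: list[dict]) -> float:
    """Fraction of events where the shadow and enforced decisions agree."""
    if not records:
        raise ValueError("no shadow records to compare")
    agree = sum(1 for r in records if r["shadow"] == r["enforced"])
    return agree / len(records)


def divergence_by_type(records: list[dict]) -> Counter:
    """Count disagreements per event type, to surface customer-visible ones."""
    return Counter(
        r["event_type"] for r in records if r["shadow"] != r["enforced"]
    )
```

A single alignment number can hide a cluster of disagreements on one sensitive event type, which is why the per-type breakdown is worth reading alongside it.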

Portkey's research on canary testing for LLM applications emphasizes the importance of shadow evaluation as a pre-canary validation step, noting that teams who skip shadow mode discover their edge cases in the canary stage instead — which means some customers experience the unexpected behavior before it is caught.

Stage 3: Canary — The Rule Acts on a Configurable Traffic Slice

In Canary stage, the new rule goes live — but only for a defined percentage of eligible events. The old rule continues to apply to the remainder. A typical starting slice is 5%: small enough to contain the blast radius of an unexpected behavior, large enough to produce statistically meaningful signal within a reasonable time window.

The canary slice should be selected randomly from the eligible event population, not chosen based on characteristics that might bias the sample. A canary that only runs against new accounts or trial customers will not catch rule behaviors that manifest differently for long-tenure paid customers.
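A common way to get an unbiased slice is hash-based bucketing. This is a substitution for true random sampling: it is pseudo-random and sticky, so the same account always lands on the same side. The function name and the `rule_key` salt below are hypothetical.

```python
import hashlib


def in_canary(entity_id: str, rule_key: str, percent: float) -> bool:
    """Sticky pseudo-random assignment: same inputs always give the same answer."""
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    # Salting with the rule key keeps one rule's canary from always hitting
    # the same accounts as another rule's canary.
    digest = hashlib.sha256(f"{rule_key}:{entity_id}".encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 10_000  # 0..9999
    return bucket < percent * 100
```

Because assignment depends only on the identifier and the salt, it ignores account age, plan, and tenure, which is the property the paragraph above asks for.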

During Canary, you monitor three categories of outcomes: the direct metric the rule is designed to affect (conversion rate, churn reduction, refund volume), downstream effects (support ticket creation, payment failure rate), and customer-reported signals (support contacts referencing the changed behavior). You also continue running your automated test suite against every rule evaluation to confirm no regression.

Canary promotion thresholds should be defined before the canary starts, not discovered during it. Common promotion criteria: 48 hours at the canary slice with no divergent customer-visible outcomes, shadow decision alignment above 95%, and no automated test failures.
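Writing the criteria as a frozen record before the canary starts makes them hard to adjust after the fact. The sketch below encodes the example criteria from the paragraph above; the class and field names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PromotionCriteria:
    min_hours_at_slice: float = 48.0
    alignment_must_exceed: float = 0.95
    allowed_test_failures: int = 0
    allowed_divergent_outcomes: int = 0


@dataclass(frozen=True)
class CanaryReport:
    hours_at_slice: float
    shadow_alignment: float
    test_failures: int
    divergent_visible_outcomes: int


def promotion_blockers(criteria: PromotionCriteria, report: CanaryReport) -> list[str]:
    """Return every unmet criterion; an empty list means the rule may promote."""
    blockers = []
    if report.hours_at_slice < criteria.min_hours_at_slice:
        blockers.append("observation window too short")
    if report.shadow_alignment <= criteria.alignment_must_exceed:
        blockers.append("shadow alignment not above threshold")
    if report.test_failures > criteria.allowed_test_failures:
        blockers.append("automated test failures")
    if report.divergent_visible_outcomes > criteria.allowed_divergent_outcomes:
        blockers.append("divergent customer-visible outcomes")
    return blockers
```

Returning the full list of blockers, rather than a single boolean, gives the rule owner a record of exactly why a promotion was refused.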

Stage 4: Active — Full Promotion

When the canary succeeds, the rule promotes to Active: it applies to 100% of eligible events, and the old rule version is retired from live evaluation and archived for rollback. The promotion should be atomic: the cutover from 5% to 100% should happen as a single versioned state change, not a gradual traffic ramp that leaves both rules active across the full population simultaneously.

The old rule version is not deleted. It is archived with its complete history: the dates it was active, the events it evaluated, the decisions it produced. This archive is your rollback target and your audit record.

Worked Example: Changing a Trial-Conversion Protocol From 14 Days to 21 Days

The scenario: your SaaS product currently triggers trial-to-paid conversion prompts at day 14. Your growth team has data suggesting that users who reach day 21 with sufficient product engagement convert at a higher rate and churn less in the first 90 days. You want to change the trial length, but you have thousands of active trials at various stages — some on day 10, some on day 13 — and you cannot surprise mid-trial customers with an unexpected extension.

Draft Stage

You write the new rule: when a trial reaches day 21 AND the user has completed at least two core product actions, trigger the conversion prompt; if the user reaches day 21 without completing core actions, trigger an engagement intervention instead. You define the conditions precisely, specify which events count as "core product actions," and write test cases against synthetic trial data representing users at day 14, day 21, and day 18.
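Expressed as code, the draft rule and its three synthetic cases might look like the sketch below. Two details are assumptions the text leaves open: the rule fires only on the day the trial reaches day 21, and "without completing core actions" is read as fewer than two. The type and decision names are hypothetical.

```python
from dataclasses import dataclass

TRIAL_LENGTH_DAYS = 21
MIN_CORE_ACTIONS = 2


@dataclass(frozen=True)
class TrialState:
    trial_day: int
    core_actions_completed: int


def trial_conversion_v2(trial: TrialState) -> str:
    """Draft of the 21-day rule; returns the action the system would take."""
    # Assumption: fire once, on day 21 exactly, so a daily evaluation
    # does not repeat the prompt on later days.
    if trial.trial_day != TRIAL_LENGTH_DAYS:
        return "no_action"
    if trial.core_actions_completed >= MIN_CORE_ACTIONS:
        return "conversion_prompt"
    # Assumption: fewer than two core actions counts as "not engaged".
    return "engagement_intervention"


SYNTHETIC_CASES = [
    (TrialState(trial_day=14, core_actions_completed=5), "no_action"),
    (TrialState(trial_day=18, core_actions_completed=5), "no_action"),
    (TrialState(trial_day=21, core_actions_completed=2), "conversion_prompt"),
    (TrialState(trial_day=21, core_actions_completed=0), "engagement_intervention"),
]
```

The day-14 case matters most here: it confirms that the new rule stays silent at the moment the old rule would have fired.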

Shadow Stage

You move the rule to Shadow and run it for seven days against live trial events. The shadow log reveals that approximately 12% of current trials would have reached day 21 with no core product actions under the new rule — a segment the old rule would have converted (or churned) at day 14. You also catch an edge case: trials that started under a legacy promotional code have a different trial-start timestamp format, and the rule was miscounting their days. You fix the condition in Draft and restart the shadow period.

Canary Stage

With the edge case fixed, you move to Canary at 5% of new trial starts. Existing trials remain on the old rule: crucially, you are not applying the new trial length retroactively to anyone mid-trial. The canary population is new trials only, starting from the canary promotion date. You monitor for at least one full trial cycle under the new rule, which is 21 days rather than 14, and watch conversion rates and support ticket volume; 30-day retention needs a longer window and is tracked as the cohort ages. The canary cohort shows the conversion rate improvement your growth team predicted. You promote to Active.
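One way to guarantee that nobody switches rules mid-trial is to choose the governing rule version once, at trial start, and store it with the trial. The sketch below assumes that approach; the version labels and function name are hypothetical.

```python
import hashlib
from datetime import datetime

OLD_RULE = "trial-conversion-v1-14d"
NEW_RULE = "trial-conversion-v2-21d"


def rule_for_new_trial(trial_id: str, started_at: datetime,
                       canary_start: datetime, canary_percent: float) -> str:
    """Decide once, at trial start, which rule version governs this trial."""
    if started_at < canary_start:
        return OLD_RULE  # existing trials are never moved mid-trial
    # Sticky pseudo-random slice of trials that start during the canary.
    digest = hashlib.sha256(f"{NEW_RULE}:{trial_id}".encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 10_000
    return NEW_RULE if bucket < canary_percent * 100 else OLD_RULE
```

Both timestamps should be timezone-aware (or both naive); Python raises a `TypeError` when the two kinds are compared.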

The Result

No mid-trial customer was surprised. No support tickets were generated by the rule change itself. The rule change is documented with a complete history: who authored it, when it entered each stage, what the shadow log showed, what the canary metrics were, and when it was promoted. If the growth team's hypothesis turns out to be wrong in month two, the old 14-day rule is in the archive and can be reactivated in under two minutes.

What to Measure at Each Stage: The Protocol Promotion Checklist

| Transition | Primary Metric | Promotion Trigger | Rollback Trigger |
|---|---|---|---|
| Draft → Shadow | Review completeness, test case coverage | Rule passes all test cases; owner has approved | Test case failure; review identifies uncovered edge case |
| Shadow → Canary | Shadow decision alignment vs. current rule | Alignment above threshold (e.g. 95%); unexpected divergences investigated and accepted | Alignment below threshold; divergence on high-sensitivity event types |
| Canary → Active | Customer-visible outcome metrics; support signal; downstream effects | No negative metric movement after minimum observation window; no unexpected customer contacts | Negative metric movement; elevated support contact rate; automated test failure |

The checklist should be defined before the rule enters Shadow, not constructed retroactively. A promotion checklist written after you have seen the shadow results is not a checklist — it is a rationalization.

Rollback Design: How to Reverse a Rule Change in Under Two Minutes

Rollback speed is an operational property of your rule management system, not an outcome of how quickly your team can act in an incident. If reversing a rule change requires a code deploy, a config file edit, or a manual database update, your rollback is measured in tens of minutes at best. In the time it takes to complete that rollback, thousands of events may have been processed under the incorrect rule.

A properly designed rule management system stores rule versions as immutable records. Activating a previous version is a state change — not a code change. The system knows which version was previously active, and can restore it by updating the active pointer. This operation should complete in seconds, not minutes.
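A minimal in-memory sketch of that design, assuming versions are numbered sequentially and activations are kept as an append-only list. The class and method names are illustrative, and a production store would persist both structures.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RuleVersion:
    number: int
    definition: str


class RuleStore:
    def __init__(self) -> None:
        self._versions: dict[int, RuleVersion] = {}
        self._activations: list[int] = []  # append-only; last entry is live

    def publish(self, definition: str) -> RuleVersion:
        """Store a new immutable version without activating it."""
        version = RuleVersion(len(self._versions) + 1, definition)
        self._versions[version.number] = version
        return version

    def activate(self, number: int) -> None:
        """Move the active pointer; nothing is edited or deleted."""
        if number not in self._versions:
            raise KeyError(f"unknown version {number}")
        self._activations.append(number)

    def rollback(self) -> RuleVersion:
        """Re-activate whichever version was live before the current one."""
        if len(self._activations) < 2:
            raise RuntimeError("no earlier activation to return to")
        self.activate(self._activations[-2])
        return self.active

    @property
    def active(self) -> RuleVersion:
        if not self._activations:
            raise RuntimeError("no version is active")
        return self._versions[self._activations[-1]]
```

A rollback is recorded as one more activation rather than as a deletion, so the activation list doubles as an audit trail. One consequence of this design is that calling `rollback()` twice in a row returns to the version that was just rolled back.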

Rollback should also be automated where possible. Define metric thresholds that trigger an automatic rollback without human intervention — particularly for high-frequency rules where even five minutes of incorrect behavior at full traffic is unacceptable. This requires that your monitoring is in place before the rule goes to Canary, not assembled after you notice something is wrong.
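The threshold check itself can be very small. The sketch below assumes metrics arrive as a name-to-value mapping and that the rollback action is passed in as a callable. Treating a missing metric as a breach is a design choice made for this sketch, not something the pattern requires.

```python
from typing import Callable, Mapping


def breached_limits(metrics: Mapping[str, float],
                    limits: Mapping[str, float]) -> list[str]:
    """Names of metrics above their limit; a missing metric counts as a breach."""
    return sorted(
        name for name, limit in limits.items()
        if name not in metrics or metrics[name] > limit
    )


def guard(metrics: Mapping[str, float], limits: Mapping[str, float],
          rollback: Callable[[], None]) -> list[str]:
    """Call rollback() once if any limit is breached; return what tripped."""
    breached = breached_limits(metrics, limits)
    if breached:
        rollback()
    return breached
```

Failing closed on a missing metric means a monitoring outage reverts the rule instead of letting it run unobserved, which suits high-frequency rules but may be too aggressive for low-risk ones.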

The test suite you wrote in Draft stage is your fastest rollback signal. If the automated tests run against every Canary evaluation and a test fails, the system can trigger a rollback before the on-call engineer has seen the alert. Teams that implement this pattern, as described in Stripe's engineering practices for safe deployment, report dramatically reduced mean time to recovery for logic-layer incidents.

Making Safe Rollout a First-Class Capability

The pattern described here is implementable from scratch using feature flags, shadow logging, and a versioned rule store. But implementing it from scratch is non-trivial: it requires building the shadow evaluation infrastructure, the version management system, the promotion state machine, and the rollback mechanism — plus the monitoring integrations that make automated rollback possible.

For teams that want this capability without building it from scratch, platforms like Memrail's Safe Rollout implement the draft → shadow → canary → active pattern as a first-class feature for SaaS decision protocols. The rule lifecycle is managed at the platform level, with built-in shadow logging, promotion checklists, and one-click rollback to any previous version.

If you are ready to formalize more of your SaaS decision logic, the SaaS 50 playbook at Memrail provides 50 ready-to-use decision protocols for the most common SaaS workflows — including trial conversion, churn intervention, refund handling, and dunning escalation — each with the five-part anatomy (name, trigger, conditions, action, version) that makes safe rollout practical.

The goal is not to make rule changes harder. It is to make them safe enough that you can make them confidently, frequently, and without the anxiety that currently makes most SaaS teams avoid touching their business logic until something breaks.