Before an AI agent can act in a consequential system (reading a customer record, sending a notification, triggering a billing event, calling an external API), it needs authorization. Not the coarse "is this user signed in" check, which is really authentication, but the fine-grained, context-sensitive kind: is this specific agent, acting on behalf of this specific user, in this specific context, permitted to take this specific action with these specific parameters?

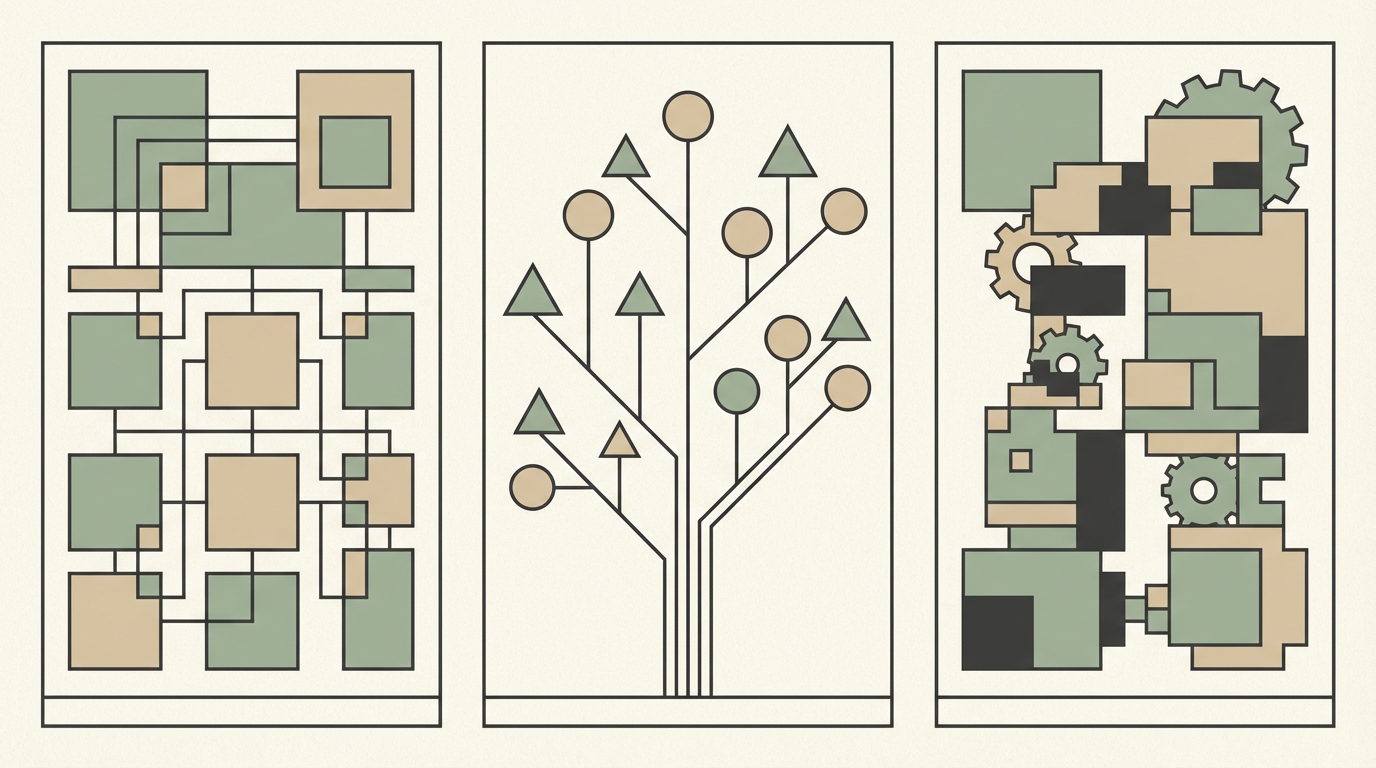

Getting this question right requires a policy engine. And choosing the wrong policy engine — or building one from scratch when you should not — is one of the most reliably expensive architectural mistakes in the AI agent governance stack. The ecosystem in 2026 offers three real options: Open Policy Agent (OPA), AWS Cedar, and custom rules engines. They are not interchangeable. Picking the right one requires understanding what each actually does well, what each does poorly, and what none of them solve on their own.

Why the Policy Engine Question Matters Now

For most of the history of software authorization, the use cases were relatively simple: can this user read this resource? Can this role perform this action? RBAC (role-based access control) and ABAC (attribute-based access control) handled these cases well enough that the policy engine question did not feel urgent.

AI agents change the calculus. An agent is not a user making a discrete request — it is an autonomous system making a sequence of decisions, often across multiple tools and APIs, on behalf of a user whose context it has partially inferred rather than explicitly received. The authorization questions become harder: What is the agent's identity, separate from the user's identity? What actions is the agent permitted to take, and under what contextual conditions? How do you handle delegation — an agent authorized by a user acting within a workflow authorized by an organization?
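One common way to answer the delegation question is attenuation. Under attenuation, an agent's effective permissions are the intersection of what every party in the chain allows, so the agent can never exceed the organization, the user, or its own provisioning. The sketch below assumes permissions reduce to plain action names. The `Delegation` class and the action strings are hypothetical, and a real policy would also condition on context and parameters.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Delegation:
    # Hypothetical: each link in the chain grants a set of action names.
    org_allowed: frozenset[str]     # what the organization's workflow permits
    user_allowed: frozenset[str]    # what the delegating user may do
    agent_allowed: frozenset[str]   # what this agent was provisioned to do

    def effective_actions(self) -> frozenset[str]:
        # Attenuation: the agent never exceeds any link, so take the intersection.
        return self.org_allowed & self.user_allowed & self.agent_allowed

    def permits(self, action: str) -> bool:
        return action in self.effective_actions()


chain = Delegation(
    org_allowed=frozenset({"crm:read", "crm:update", "billing:charge"}),
    user_allowed=frozenset({"crm:read", "crm:update"}),
    agent_allowed=frozenset({"crm:read", "notify:send"}),
)
print(sorted(chain.effective_actions()))  # ['crm:read']
print(chain.permits("crm:update"))        # False: the agent was never provisioned for it
```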

These questions do not fit cleanly into traditional RBAC. They require a policy engine that can evaluate rich context, express complex conditional logic, and operate at the latency constraints of real-time agent workflows. The policy engine is not a peripheral concern. It is load-bearing infrastructure.

Before evaluating each option, it is also worth noting the broader governance context. A policy engine handles authorization — who can do what. It does not, by itself, handle the audit trail of what was decided and why, the typed fact layer that feeds decisions, or the safe rollout of policy changes. Those belong to the broader decision infrastructure that the policy engine sits inside. The relationship between policy engine and governance stack is addressed at the end of this article.

Open Policy Agent: Ecosystem Breadth With Real Tradeoffs

What OPA Is

Open Policy Agent is a general-purpose policy engine and a graduated project of the CNCF (Cloud Native Computing Foundation), having reached graduated status in 2021. It uses a purpose-built policy language called Rego. OPA decouples policy decisions from policy enforcement. The application asks OPA "is this allowed?", OPA evaluates the relevant policies against the provided context and returns a structured decision, and the application enforces that decision.
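The minimal sketch below illustrates that split. It queries OPA's REST Data API (`POST /v1/data/<path>` with the request context under `input`) and leaves enforcement to the caller. It assumes an OPA server on its default port 8181 and a hypothetical Rego package `agents.authz` that defines a boolean `allow` rule. The input field names are illustrative.

```python
import json
import urllib.request

# Assumptions: OPA listening on its default port, and a hypothetical Rego
# package `agents.authz` that defines a boolean rule named `allow`.
OPA_URL = "http://localhost:8181/v1/data/agents/authz/allow"


def build_query(agent: str, user: str, action: str, resource: str) -> urllib.request.Request:
    """POST to OPA's Data API; all request context travels under `input`."""
    body = {"input": {"agent": agent, "user": user,
                      "action": action, "resource": resource}}
    return urllib.request.Request(
        OPA_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def parse_decision(response_body: bytes) -> bool:
    """OPA omits `result` when the rule is undefined; treat anything but true as deny."""
    return json.loads(response_body).get("result") is True


def is_allowed(agent: str, user: str, action: str, resource: str) -> bool:
    request = build_query(agent, user, action, resource)
    try:
        with urllib.request.urlopen(request, timeout=0.5) as response:
            return parse_decision(response.read())
    except OSError:
        # Fail closed: an unreachable or erroring policy engine never grants access.
        return False
```

A tool wrapper would then call `is_allowed(...)` and refuse to act on `False`. Failing closed on network errors is a design choice. It prevents an unavailable policy engine from silently granting access, at the cost of availability.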

OPA's primary adoption has been in infrastructure-level use cases: Kubernetes admission control, API gateway authorization, Terraform policy validation, and service mesh authorization. It is the de facto standard in the cloud-native infrastructure world, which means it has extensive tooling, integrations, and community knowledge.

Strengths

- Ecosystem breadth: OPA has ready-made integrations for Kubernetes, Envoy, Kong, AWS, GCP, GitHub Actions, and dozens of other infrastructure components. If you already run cloud-native infrastructure, there is a good chance OPA is already in your stack.

- Rego's expressivity: Rego is a declarative, Datalog-inspired query language. It can express complex policy logic (iteration over nested data, comprehensions, graph traversal via built-ins) that is difficult or impossible to represent in simpler policy languages. For organizations whose policy requirements span many dimensions at once, this expressivity is a real advantage.

- CNCF governance: OPA has formal project governance, a public roadmap, and a broad contributor base — reducing single-vendor risk in ways that proprietary alternatives cannot match.

- Extensive testing tooling: OPA's policy testing framework is mature. Rego unit tests are straightforward to write and run in CI pipelines with the built-in `opa test` command.

Weaknesses

- Rego's learning curve: Rego is not intuitive for engineers who do not have a background in logic programming or Datalog. The language is powerful but reads unlike any other language in a typical SaaS engineering team's stack. Policy review — essential for governance — requires fluency that takes time to build.

- Performance at application layer: OPA was designed for infrastructure-level policy evaluation, not the sub-millisecond latency requirements of application-layer authorization in high-throughput AI agent workflows. OPA performs well for its primary use cases; it performs less well when asked to evaluate complex policies inline in real-time request paths.

- The Styra / Apple uncertainty: Styra, the company founded by OPA's creators and the primary commercial force behind OPA's development, was acquired by Apple in August 2025. The long-term implications for OPA's commercial ecosystem — Styra DAS, enterprise support contracts, the commercial product roadmap — are not yet fully resolved. The open source project under CNCF governance is unaffected by the acquisition in principle, but the uncertainty is real and worth factoring into long-term architectural decisions.
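Where inline evaluation is too slow for a given engine and workload, a common mitigation is to cache decisions for a short time. The sketch below assumes requests can be keyed by a hashable tuple and that `evaluate` wraps whatever engine is in use. `DecisionCache` is a hypothetical helper, not part of OPA or Cedar.

```python
import time
from typing import Callable, Hashable


class DecisionCache:
    """Hypothetical TTL cache in front of a policy engine call."""

    def __init__(self, evaluate: Callable[[Hashable], bool], ttl_seconds: float,
                 clock: Callable[[], float] = time.monotonic):
        self._evaluate = evaluate        # e.g. an OPA client or embedded authorizer
        self._ttl = ttl_seconds
        self._clock = clock
        self._entries: dict = {}         # request key -> (decision, expires_at)

    def is_allowed(self, request_key: Hashable) -> bool:
        now = self._clock()
        cached = self._entries.get(request_key)
        if cached is not None and cached[1] > now:
            return cached[0]                          # fresh: skip the engine
        decision = self._evaluate(request_key)        # miss or expired
        self._entries[request_key] = (decision, now + self._ttl)
        return decision

    def invalidate_all(self) -> None:
        """Call after a policy deploy so no decision outlives the policy that made it."""
        self._entries.clear()


engine_calls = []


def fake_engine(key) -> bool:
    engine_calls.append(key)
    return key[1] == "crm:read"


fake_now = [0.0]
cache = DecisionCache(fake_engine, ttl_seconds=5.0, clock=lambda: fake_now[0])
key = ("agent-7", "crm:read", "account/123")
cache.is_allowed(key)
cache.is_allowed(key)
print(len(engine_calls))   # 1: the second check was served from the cache
fake_now[0] = 6.0          # past the TTL
cache.is_allowed(key)
print(len(engine_calls))   # 2
```

The trade-off is freshness. A cached allow can outlive a revoked permission until its TTL expires, so short TTLs and explicit invalidation on policy deploys matter more here than raw hit rate.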

Best Fit for AI Agents

OPA is the right choice when your AI agent authorization needs to integrate tightly with existing OPA-governed infrastructure (Kubernetes workloads, service meshes, API gateways), when your team already has Rego fluency, and when policy complexity genuinely requires Rego's expressivity. It is a poor fit for teams starting from zero on policy infrastructure for application-layer AI agent authorization.

AWS Cedar: Fast, Typed, and Increasingly Hard to Ignore

What Cedar Is

Cedar is an open source policy language and evaluation engine released by AWS in 2023 and used internally by Amazon Verified Permissions. It was designed explicitly for application-level authorization — the fine-grained, per-request "can this principal take this action on this resource given this context" decisions that run in the hot path of real-time applications. Cedar is implemented in Rust, formally verified using automated reasoning tools, and designed to be readable by non-engineers.

Strengths

- Evaluation speed: Cedar's Rust implementation delivers authorization decisions at sub-millisecond latency in benchmarks. Permit.io's benchmark research found Cedar running 40–60x faster than OPA on comparable authorization workloads. For AI agents making multiple authorization decisions per workflow step, this latency difference is not academic — it determines whether authorization checks are feasible inline or must be batched and cached.

- Typed policy language: A Cedar request has four parts: a Principal (who is acting), an Action (what they want to do), a Resource (what they want to do it to), and a Context record (the conditions under which the action is requested). Principals, actions, and resources are typed entities that can be declared in a schema. Validating policies against that schema catches type errors at write time rather than at evaluation time. This is a significant advantage for teams that need to review and approve policy changes without first running them against live traffic.

- Readable syntax: Cedar policies read like English-structured logic. A Cedar policy that says "permit a customer-success-agent to read CRM records belonging to accounts the agent's user is assigned to" is intelligible to a product manager or a compliance reviewer, not just to the engineer who wrote it. This readability has operational implications: governance processes that require policy review by non-technical stakeholders are significantly more practical with Cedar than with Rego.

- Formal verification: Cedar's semantics are formally modeled and verified with automated reasoning tools (originally Dafny, now primarily Lean), and the Rust implementation is checked against that verified model through differential testing. Proven properties include default deny (nothing is allowed unless a permit policy applies) and forbid-overrides-permit. As a result, a policy set's behavior is predictable rather than dependent on evaluation order. For AI governance contexts where auditability and predictability are requirements, formal verification is a genuine differentiator.

- Cedar v4.x improvements: Cedar's 4.x release series improved schema validation and tooling for policy set management, building on features such as policy templates that earlier releases already provided. Together these address the operational complexity of managing authorization policy at scale.
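The toy Python model below makes Cedar's decision rule concrete by reproducing its documented semantics. Any satisfied `forbid` yields Deny. Otherwise, any satisfied `permit` yields Allow. Everything else is denied by default. This is not the Cedar API: the `Request`, `Policy`, and `authorize` names, the dict-based entities, and the example policies are assumptions. The comment shows roughly how the customer-success example above might be written in Cedar, with hypothetical entity types and attributes.

```python
from dataclasses import dataclass, field
from typing import Callable

# Toy model of Cedar's decision rule (NOT the Cedar API):
#   1. if any satisfied policy is a forbid -> Deny
#   2. else if any satisfied policy is a permit -> Allow
#   3. else -> Deny (default deny)


@dataclass(frozen=True)
class Request:
    principal: dict          # the acting agent and its attributes
    action: str
    resource: dict
    context: dict = field(default_factory=dict)


@dataclass(frozen=True)
class Policy:
    policy_id: str
    effect: str                              # "permit" or "forbid"
    condition: Callable[[Request], bool]     # stands in for scope + when/unless


def authorize(policies: list[Policy], req: Request) -> tuple[str, list[str]]:
    satisfied = [p for p in policies if p.condition(req)]
    forbids = [p.policy_id for p in satisfied if p.effect == "forbid"]
    if forbids:
        return "Deny", forbids
    permits = [p.policy_id for p in satisfied if p.effect == "permit"]
    if permits:
        return "Allow", permits
    return "Deny", []


# Roughly how the first policy might read in Cedar (hypothetical types/attributes):
#   permit (principal is Agent, action == Action::"readCrmRecord", resource is CrmRecord)
#   when { principal.actingFor.assignedAccounts.contains(resource.account) };
crm_read = Policy(
    "crm-read-assigned", "permit",
    lambda r: r.action == "readCrmRecord"
    and r.resource["account"] in r.principal["acting_for"]["assigned_accounts"],
)
block_suspended = Policy(
    "block-suspended-agents", "forbid",
    lambda r: r.principal.get("suspended", False),
)
policies = [crm_read, block_suspended]

agent = {"id": "cs-agent-1", "suspended": False,
         "acting_for": {"id": "alice", "assigned_accounts": {"acme", "globex"}}}
suspended = {**agent, "suspended": True}

print(authorize(policies, Request(agent, "readCrmRecord", {"account": "acme"})))
# ('Allow', ['crm-read-assigned'])
print(authorize(policies, Request(agent, "readCrmRecord", {"account": "initech"})))
# ('Deny', [])
print(authorize(policies, Request(suspended, "readCrmRecord", {"account": "acme"})))
# ('Deny', ['block-suspended-agents'])
```

Returning the IDs of the satisfied policies alongside the outcome loosely mirrors how Cedar reports which policies determined a decision. That information is useful raw material for an audit trail.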

Weaknesses

- AWS ecosystem bias: Cedar's commercial implementation is Amazon Verified Permissions, an AWS managed service. The open source project is genuinely independent, but the ecosystem of tooling, integrations, and reference architectures is more AWS-centric than OPA's broadly cloud-native ecosystem. Teams committed to multi-cloud or non-AWS infrastructure will find less community material to draw on.

- Younger community: Cedar's community is smaller than OPA's. Stack Overflow coverage, blog posts, and third-party tooling are thinner. Teams choosing Cedar should expect to do more primary source reading from the official documentation and the Cedar GitHub repository.

- Intentional expressivity constraints: Cedar's type system and evaluation model make certain complex policy patterns difficult to express. This is intentional — Cedar trades raw expressivity for predictability and performance — but it means teams with genuinely unusual policy requirements may find Cedar's constraints frustrating.

Best Fit for AI Agents

Cedar is the right choice for teams building application-layer AI agent authorization from scratch in 2026. Its speed, readability, and type safety align well with the requirements of AI agent governance: authorization decisions need to run fast, policies need to be reviewable by non-engineers, and the behavior of the authorization layer needs to be predictable and formally verifiable.

Custom Rules Engines: The One Valid Case and the Three Traps

When Custom Makes Sense

There is exactly one scenario where a custom rules engine is the right architectural choice for AI agent authorization: when your policy domain is so specific, so performance-critical, and so poorly served by existing policy languages that the cost of building and maintaining a custom engine is genuinely lower than the cost of adapting Cedar or OPA to your requirements.

This is a narrow case. It applies to a small number of organizations with genuine domain-specific requirements: real-time trading systems with microsecond authorization requirements, specialized regulatory domains with policy languages defined by external bodies, or systems where the authorization logic is inseparable from domain-specific data structures that no generic policy engine handles efficiently.

The Three Traps

Most teams that build custom rules engines do so for one of three bad reasons:

- Trap 1 — "OPA seemed complex": Rego's learning curve causes teams to conclude that all policy engines are too complex, and that building something simpler in-house will be faster. It usually is faster — for the first six months. After that, the team discovers that simple rules engines become complex rules engines as requirements grow, and that the custom engine lacks the testing infrastructure, formal verification, and community knowledge of its open source alternatives.

- Trap 2 — "We already have if/else logic in the codebase": Teams with existing authorization logic embedded in application code sometimes conclude that formalizing it into a custom rules engine is more practical than migrating to OPA or Cedar. In most cases, this perpetuates the core problem: the authorization logic remains tightly coupled to the application, untestable in isolation, and difficult to review or change safely.

- Trap 3 — "We need full control": The argument that a custom engine gives full control is true but underspecified. Full control also means full responsibility: for security, for correctness, for performance, and for maintenance. The teams that cite control as the primary reason for custom engines rarely account for the full lifecycle cost of what they are building.
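The usual alternative to Trap 2 is to move the decision behind a narrow interface. Application code then only enforces, and the decision logic can be tested and swapped independently. This is a sketch under stated assumptions: `PolicyDecisionPoint`, `send_notification`, `AuthorizationError`, and `StubPDP` are hypothetical names. A production implementation of the protocol would delegate to OPA, Cedar, or a custom engine.

```python
from typing import Protocol


class AuthorizationError(Exception):
    """Raised when the policy decision point refuses an action."""


class PolicyDecisionPoint(Protocol):
    """Anything that can answer 'is this allowed?': an OPA client, a Cedar authorizer, a stub."""

    def is_allowed(self, principal: str, action: str, resource: str, context: dict) -> bool: ...


def send_notification(pdp: PolicyDecisionPoint, agent_id: str,
                      recipient: str, message: str) -> str:
    # Enforcement point: ask first, then act or refuse. No policy logic lives here.
    if not pdp.is_allowed(agent_id, "notify:send", recipient, {"length": len(message)}):
        raise AuthorizationError(f"{agent_id} may not notify {recipient}")
    return f"sent to {recipient}"  # placeholder for the real side effect


class StubPDP:
    """Test double that allows an explicit set of (principal, action, resource) triples."""

    def __init__(self, allowed: set[tuple[str, str, str]]):
        self.allowed = allowed
        self.seen: list[tuple[str, str, str, dict]] = []

    def is_allowed(self, principal: str, action: str, resource: str, context: dict) -> bool:
        self.seen.append((principal, action, resource, context))
        return (principal, action, resource) in self.allowed


pdp = StubPDP({("agent-7", "notify:send", "alice")})
print(send_notification(pdp, "agent-7", "alice", "Your ticket was updated"))
# sent to alice
```

Because the tool depends only on the protocol, the same call site can point at OPA, Cedar, or a stub in tests. The policy logic can also be reviewed without reading application code.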

Decision Matrix: Choosing Between OPA, Cedar, and Custom

| Factor | Favors OPA | Favors Cedar | Favors Custom |
|---|---|---|---|
| Team size and policy engineering capacity | Larger teams with dedicated platform engineering; existing Rego fluency | Smaller teams; mixed technical / non-technical policy reviewers | Large teams with very specific domain requirements and capacity to maintain |
| Policy complexity | Complex, deeply nested policies spanning many dimensions simultaneously | Rich but well-structured ABAC / RBAC with contextual conditions | Policies inseparable from highly domain-specific data structures |
| Performance SLA | Millisecond range acceptable; infrastructure-layer use cases | Sub-millisecond required; inline application-layer authorization | Microsecond range required with highly specific data access patterns |
| Cloud dependency tolerance | Multi-cloud or non-AWS preferred; broad cloud-native ecosystem | AWS primary, or comfortable with open source Cedar independent of AWS services | Air-gapped or highly constrained deployment environments |
| Compliance audit requirements | Strong; mature OPA audit tooling and policy-as-code documentation patterns | Strong; formal verification provides stronger correctness guarantees | Depends entirely on what the team builds into the custom engine |
| Change velocity | Moderate; Rego review cycles require policy engineering capacity | High; Cedar's readable syntax enables faster review by a broader stakeholder group | Low; custom engines accumulate technical debt that slows change velocity over time |

The Honest 2026 Recommendation

Most teams building AI agent authorization systems in 2026 should start with Cedar.

The performance characteristics are well-matched to application-layer authorization in real-time agent workflows. The readable syntax enables policy review by product managers, compliance teams, and legal counsel — stakeholders who need to be able to read and approve authorization policies but who cannot be expected to learn Rego. The formal verification properties provide stronger correctness guarantees than OPA's evaluation model. And Cedar v4.x has matured the operational tooling to a point where managing policy sets at scale is tractable for teams without dedicated policy engineering specialization.

The main case for choosing OPA instead of Cedar is existing investment: if your infrastructure already runs on OPA — Kubernetes admission control, service mesh authorization, API gateway policies — and your team has Rego fluency, extending that investment to AI agent authorization is lower-cost than introducing a second policy engine paradigm. The ecosystem coherence argument is real, and it should not be dismissed.

The Styra / Apple acquisition uncertainty is worth monitoring but should not, by itself, drive teams away from OPA. The CNCF-governed open source project is structurally independent of Styra's commercial product decisions. Teams that are not dependent on Styra's commercial offerings are minimally exposed to the acquisition's consequences.

Custom rules engines are the right answer for a small number of organizations with genuine domain-specific requirements. They are the wrong answer for any team that is building one primarily because getting started with Cedar seemed too involved.

What Neither Solves: The Broader Governance Stack

It is important to be precise about what a policy engine does and does not do in the context of AI agent governance.

A policy engine answers authorization questions: is this action permitted? On its own, it does not produce a complete decision trace, meaning an audit record of which rules were evaluated, with which input facts, at which policy version, producing which outcome. Engines do supply raw material for one: OPA can emit decision logs, and Cedar reports the policies that determined each decision. Assembling that material into a durable audit trail, however, is left to the surrounding system. A policy engine also does not provide a safe rollout mechanism for policy changes or maintain the typed fact layer that feeds policy evaluation. Nor does it answer the observability questions governance teams need for incident response, such as why a decision produced a given outcome from a given set of inputs.
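A decision trace can start as an append-only record of exactly the items listed above: the rules that determined the outcome, the input facts, the policy version, and the outcome itself. The sketch below is a minimal illustration. The field names and the version string format are assumptions and do not match any engine's native log schema. `input_facts` must also be JSON-serializable.

```python
import hashlib
import json
import uuid
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class DecisionTrace:
    policy_version: str            # hypothetical: a policy bundle revision or commit hash
    principal: str
    action: str
    resource: str
    input_facts: dict              # exactly the facts the engine evaluated
    determining_policies: tuple    # IDs of the rules that produced the outcome
    outcome: str                   # "Allow" or "Deny"
    decision_id: str = field(default_factory=lambda: str(uuid.uuid4()))
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat())

    def input_digest(self) -> str:
        # Canonical JSON (sorted keys, fixed separators) so equal inputs hash equally.
        canonical = json.dumps(
            [self.policy_version, self.principal, self.action,
             self.resource, self.input_facts],
            sort_keys=True, separators=(",", ":"))
        return hashlib.sha256(canonical.encode("utf-8")).hexdigest()

    def to_log_line(self) -> str:
        record = asdict(self)
        record["input_digest"] = self.input_digest()
        return json.dumps(record, sort_keys=True)


trace = DecisionTrace(
    policy_version="policies@3f2c1a9",
    principal="agent-7", action="crm:read", resource="account/acme",
    input_facts={"acting_for": "alice", "assigned_accounts": ["acme"]},
    determining_policies=("crm-read-assigned",), outcome="Allow",
)
print(trace.to_log_line())
```

The input digest lets a later replay confirm that it is re-evaluating the same facts at the same version. That is the starting point for answering why a decision came out the way it did.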

The policy engine is one component in a broader decision architecture. Its role is authorization enforcement at the action boundary. The other components — fact management, decision tracing, policy change management, observability — must be built alongside it for the governance stack to be complete.
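For policy change management, one common pattern is shadow evaluation. The candidate policy runs against real or replayed requests alongside the enforced one, and every disagreement is reviewed before switching. The sketch assumes each policy can be called as a function from a request dict to a boolean. `shadow_compare` and both example policies are hypothetical.

```python
from typing import Callable, Iterable

PolicyFn = Callable[[dict], bool]   # hypothetical: request -> allow?


def shadow_compare(current: PolicyFn, candidate: PolicyFn,
                   requests: Iterable[dict]) -> list[dict]:
    """Evaluate a candidate policy next to the enforced one.

    Only `current` decides what actually happens; the candidate's answers are
    recorded, and every disagreement is returned for human review.
    """
    disagreements = []
    for req in requests:
        live, shadow = current(req), candidate(req)
        if live != shadow:
            disagreements.append({"request": req, "live": live, "candidate": shadow})
    return disagreements


def current_policy(req: dict) -> bool:
    return req["action"] in {"crm:read", "crm:update"}


def candidate_policy(req: dict) -> bool:
    # Proposed change: updates only for accounts assigned to the acting user.
    if req["action"] == "crm:update":
        return req.get("assigned", False)
    return req["action"] == "crm:read"


replayed = [
    {"action": "crm:read"},
    {"action": "crm:update", "assigned": True},
    {"action": "crm:update", "assigned": False},
]
for diff in shadow_compare(current_policy, candidate_policy, replayed):
    print(diff)
# {'request': {'action': 'crm:update', 'assigned': False}, 'live': True, 'candidate': False}
```

An empty disagreement list does not prove the change is safe, since it only covers the traffic replayed. A non-empty list, however, shows reviewers exactly which real requests would change outcome.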

For a full description of how the policy engine fits into the broader governance architecture, read our article on The Agent Governance Stack: Four Layers Every Enterprise Needs Before Going to Production. For the architectural pattern that situates the policy engine within the decision plane, see Separating Logic from Models: Why Your AI System Needs a Decision Plane.

Summary: Three Engines, One Decision

Choosing a policy engine for AI agent authorization is an architectural decision with long-term consequences. The options are not equivalent:

- OPA is the right choice if you have existing investment in OPA-governed infrastructure and team Rego fluency. Its ecosystem breadth is unmatched; its application-layer performance and the Styra acquisition uncertainty are real tradeoffs.

- Cedar is the right choice for most teams starting AI agent authorization from scratch in 2026. Its speed, readability, and formal verification properties align well with the requirements of governed AI agent systems at application layer. The younger community is a genuine tradeoff; the performance and correctness properties are genuine advantages.

- Custom is the right choice for a small number of organizations with genuine domain-specific requirements. It is a trap for most teams that build one to avoid learning an existing tool.

The policy engine question has a defensible answer. Make the choice deliberately, make it in the context of your broader governance architecture, and treat the policy engine as what it is: load-bearing infrastructure for AI agent deployments, not a configuration detail to be revisited later.